Load Balancers

Overview

Load Balancers distribute incoming traffic across multiple backend instances, providing high availability and horizontal scalability for applications. Each load balancer is a dedicated managed instance with full configuration control through port-based configuration blocks, SSL certificates, health checks, and backend node management.

Two deployment modes are supported:

- VPC Mode -- Deploy within a VPC for private network traffic distribution between VPC-connected instances

- Public Mode -- Deploy with a public IP for direct internet-facing load balancing across instances with public IPs

Admin Configuration

Enabling Load Balancers

Before users can deploy load balancers, an administrator must enable and configure the feature on the desired hypervisor group.

- Navigate to Compute → Hypervisor Groups

- Select the hypervisor group where you want to enable load balancers

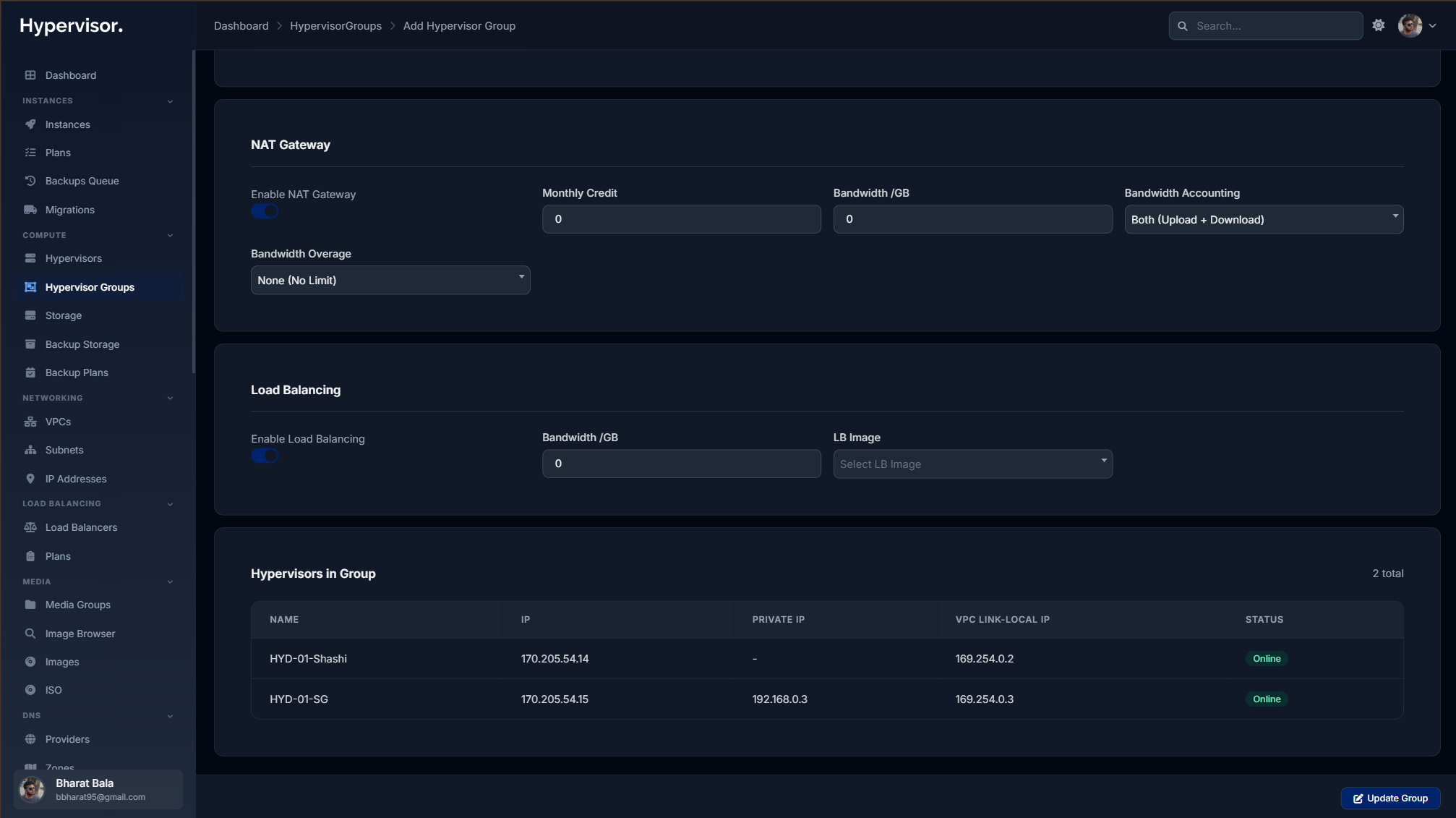

- In the Load Balancer section, configure:

- LB Enabled -- Toggle to enable load balancer deployments for this location

- LB Image -- Select the base OS image for load balancers (must have purpose set to load_balancer)

Screenshot: Admin panel > Hypervisor Groups > Edit > Load Balancer section showing the LB Enabled toggle and LB Image dropdown

Load balancer images must be created with the load_balancer purpose. Only images marked with this purpose will appear in the dropdown. Set the purpose when creating or editing an image under Media → Images.

Creating LB Plans

LB Plans define the resource allocation for load balancer instances (CPU, RAM, storage, network).

- Navigate to Load Balancers → Plans in the admin sidebar

- Click Create Plan

- Configure the plan:

- Name - Display name for the plan (e.g., "LB Small", "LB Large")

- CPU / RAM / Storage - Resource allocation for the load balancer

- Network Settings - NIC type, bandwidth limits, inbound/outbound averages

- Storage Settings - Storage type, I/O mode, read/write limits

- CPU Topology - Sockets, cores, threads, CPU mode and model

Screenshot: Admin panel > Load Balancers > Plans page showing the plan creation modal with all resource configuration sections

Deploying a Load Balancer (Admin)

Admins can deploy load balancers on behalf of any user.

- Navigate to Load Balancers → Load Balancers in the admin sidebar

- Click Create Load Balancer

- Select the User who will own this load balancer

- Choose Deploy Mode:

- VPC - Select a VPC and subnet (load balancer gets a VPC IP)

- Public - Select a location/hypervisor group (load balancer gets a public IP)

- Select the LB Plan

- Enter a Name for the load balancer

- Click Create

Screenshot: Admin panel > Load Balancers > Create modal showing user selection, deploy mode toggle (VPC/Public), and plan selection

The system will provision the load balancer and display it in the list. Deployment typically takes 1--2 minutes.

User Guide

Creating a Load Balancer

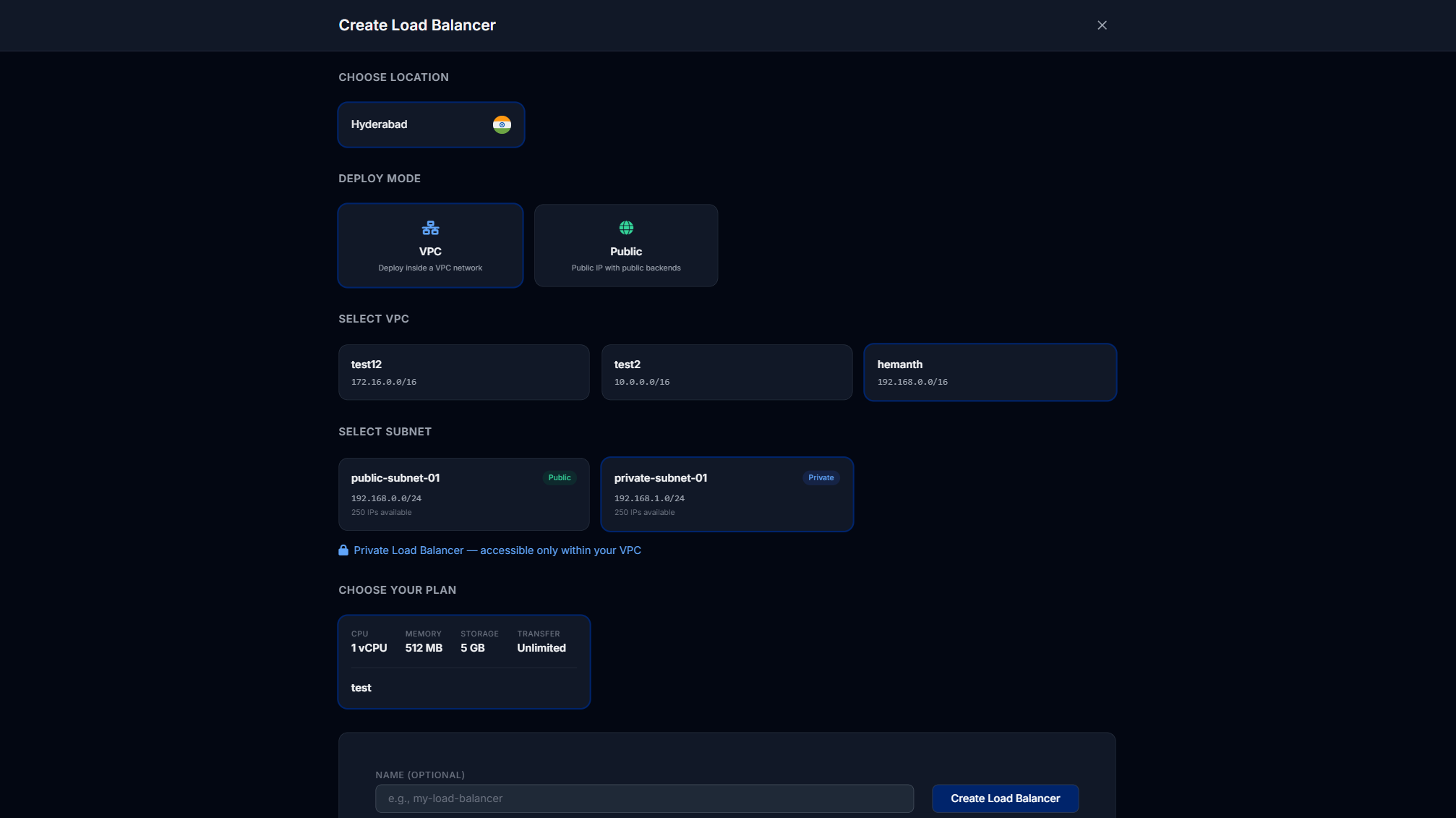

- Navigate to Load Balancers in the user sidebar

- Click Create Load Balancer

- Select a Location (hypervisor group with LB enabled)

- Choose Deploy Mode:

- VPC - Requires an existing VPC in the selected location. Select VPC and subnet.

- Public - No VPC required. The load balancer gets a public IP directly.

- Select a Plan

- Enter a Name

- Click Create

Screenshot: User panel > Load Balancers > Create page showing location cards, deploy mode selection (VPC/Public toggle), plan selection, and name input

Choose VPC mode when your backend instances are all within the same VPC and you want private network load balancing. Choose Public mode when you want a simple public-facing load balancer that distributes traffic to instances with public IPs.

Managing a Load Balancer

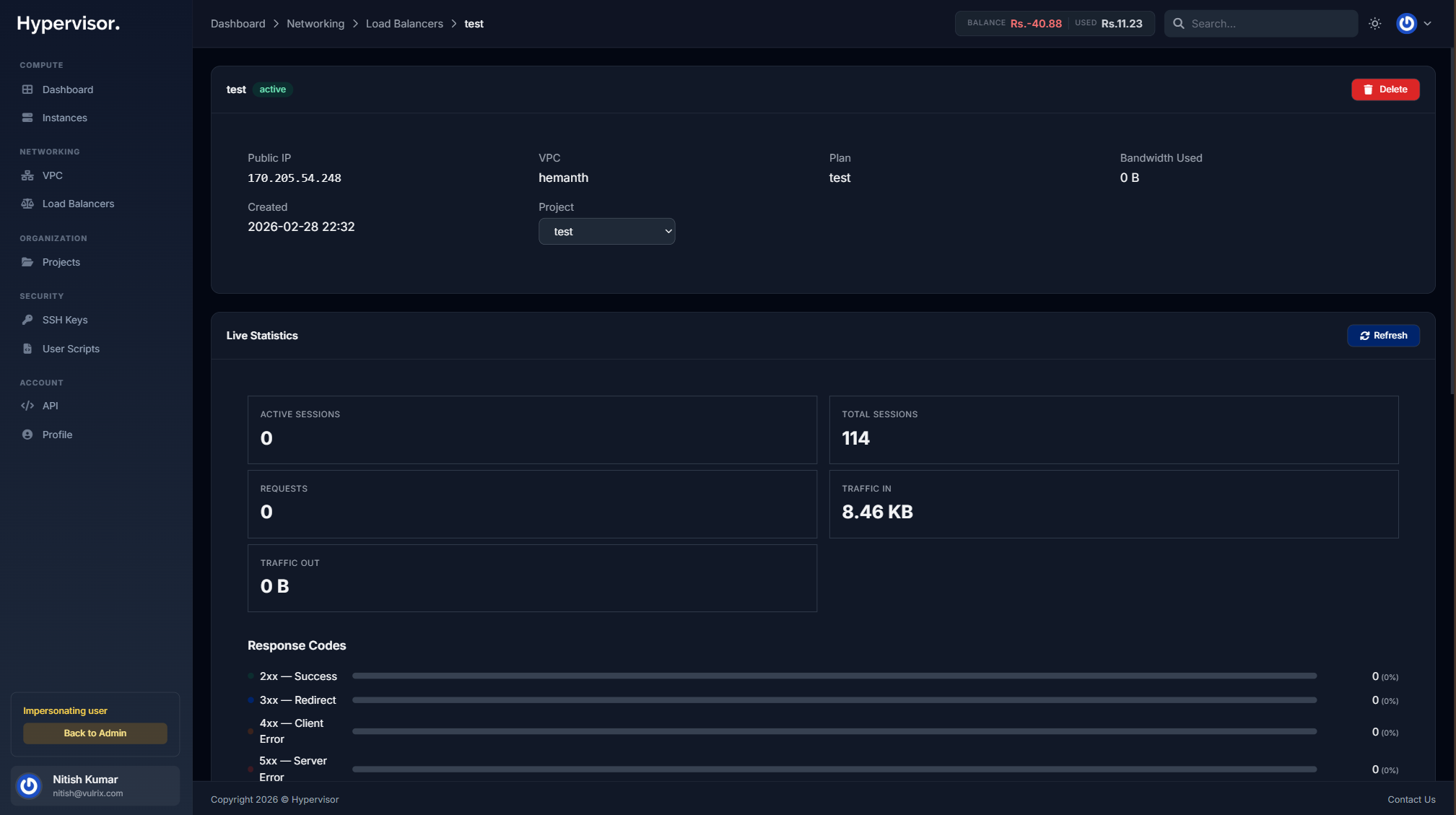

After deployment, click on a load balancer to access its management page.

Screenshot: User panel > Load Balancers > Show page showing the overview with status, IP addresses, and resource usage

Configuration

Load balancer configuration is organized as configuration blocks, where each block represents a listening port with its own protocol, algorithm, health checks, and backend nodes. This unified approach keeps all settings for a given port in one place.

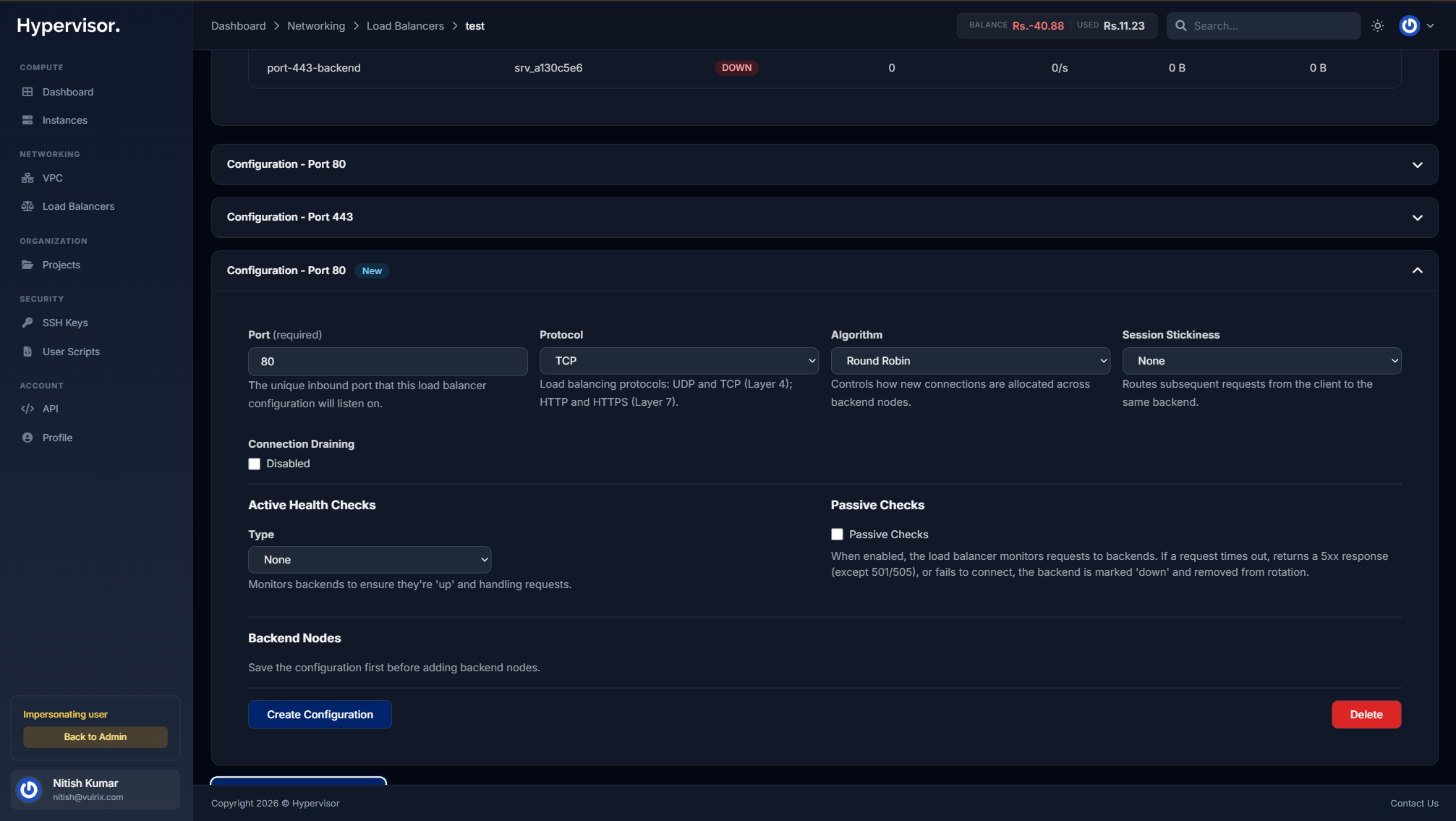

Adding a Configuration Block

- In the load balancer management page, click Add Configuration

- A new collapsible card appears for the configuration block

Screenshot: Load Balancer show page > Configuration block card showing port, protocol, algorithm, and session stickiness fields in a single row, with health checks and backend nodes sections below

Block Settings

Each configuration block contains the following settings:

- Port - The inbound port this configuration listens on (e.g., 80, 443, 8080). Each port can only be used by one configuration block.

- Protocol - The load balancing protocol:

- TCP - Layer 4 load balancing (raw TCP)

- UDP - Layer 4 load balancing (UDP)

- HTTP - Layer 7 load balancing with HTTP awareness

- HTTPS - Layer 7 with SSL termination

- Algorithm - How connections are distributed across backend nodes:

- Round Robin - Distributes evenly across nodes in rotation

- Least Connections - Sends to the node with fewest active connections

- Source IP - Sticks clients to the same node based on their source IP

- Session Stickiness - Ensures subsequent requests from a client reach the same backend:

- None - No stickiness

- Cookie - Uses an HTTP cookie (configurable cookie name and TTL)

- Source IP - Sticks based on client IP address
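
The three algorithms can be illustrated with a short Python sketch. This is not the load balancer's own implementation: the function names and the node labels (`web-1` and so on) are hypothetical, and the hash used for Source IP is an assumption chosen only to show that the mapping is stable.

```python
import hashlib
import itertools

def round_robin(nodes):
    """Return an iterator that yields nodes in rotation, one per new connection."""
    return itertools.cycle(nodes)

def least_connections(active):
    """Pick the node with the fewest active connections.

    `active` maps a node name to its current connection count.
    """
    return min(active, key=active.get)

def source_ip(nodes, client_ip):
    """Map a client IP to a node with a stable hash.

    The same client lands on the same node as long as the node list
    does not change. SHA-256 is an illustrative choice, not the real one.
    """
    digest = hashlib.sha256(client_ip.encode()).digest()
    return nodes[int.from_bytes(digest[:8], "big") % len(nodes)]

nodes = ["web-1", "web-2", "web-3"]
rr = round_robin(nodes)
first_four = [next(rr) for _ in range(4)]  # wraps back to web-1 on the fourth pick
```

Round Robin and Least Connections spread load without regard to who the client is, while Source IP trades some evenness for affinity.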

SSL Certificates

When the protocol is set to HTTPS, an SSL certificate dropdown appears. You can select from certificates you've uploaded in the Certificates section (see below).

Connection Draining

Enable connection draining to allow existing connections to complete gracefully before removing a backend node. Configure the Drain Timeout in seconds to control how long to wait.

Health Checks

Each configuration block supports both active and passive health checks:

Active Health Checks:

- Type - Choose between:

- None - No active checks

- TCP Connect - Verifies the backend is accepting TCP connections

- HTTP Status - Sends an HTTP request and checks the response status

- Check Interval - How often to check (in seconds)

- Timeout - Maximum wait time for a response (in seconds)

- Unhealthy Threshold - Number of consecutive failures before marking a node as down

- Check Path - (HTTP only) The URL path to check (e.g., /health)

- Check Port - (HTTP only, optional) A specific port to check; defaults to the target port

Passive Checks: When enabled, the load balancer monitors live traffic to backends. If a request times out, returns a 5xx response, or fails to connect, the backend is automatically marked as down and removed from rotation.
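
To make the interaction between Timeout and Unhealthy Threshold concrete, here is a minimal Python sketch of a TCP Connect check and a consecutive-failure counter. The names `tcp_connect_check` and `NodeHealth` are hypothetical, and the recovery rule (one successful check marks the node up again) is an assumption, since this guide does not describe a healthy threshold.

```python
import socket

def tcp_connect_check(host, port, timeout):
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts, and timeouts.
        return False

class NodeHealth:
    """Track consecutive check failures for one backend node."""

    def __init__(self, unhealthy_threshold):
        self.unhealthy_threshold = unhealthy_threshold
        self.failures = 0
        self.up = True

    def record(self, check_passed):
        """Record one check result and return whether the node is considered up."""
        if check_passed:
            # Assumption: a single success resets the counter and restores the node.
            self.failures = 0
            self.up = True
        else:
            self.failures += 1
            if self.failures >= self.unhealthy_threshold:
                self.up = False
        return self.up
```

With an Unhealthy Threshold of 3, a node stays in rotation through two failed checks and is marked down on the third, so the worst-case detection time is roughly the check interval multiplied by the threshold.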

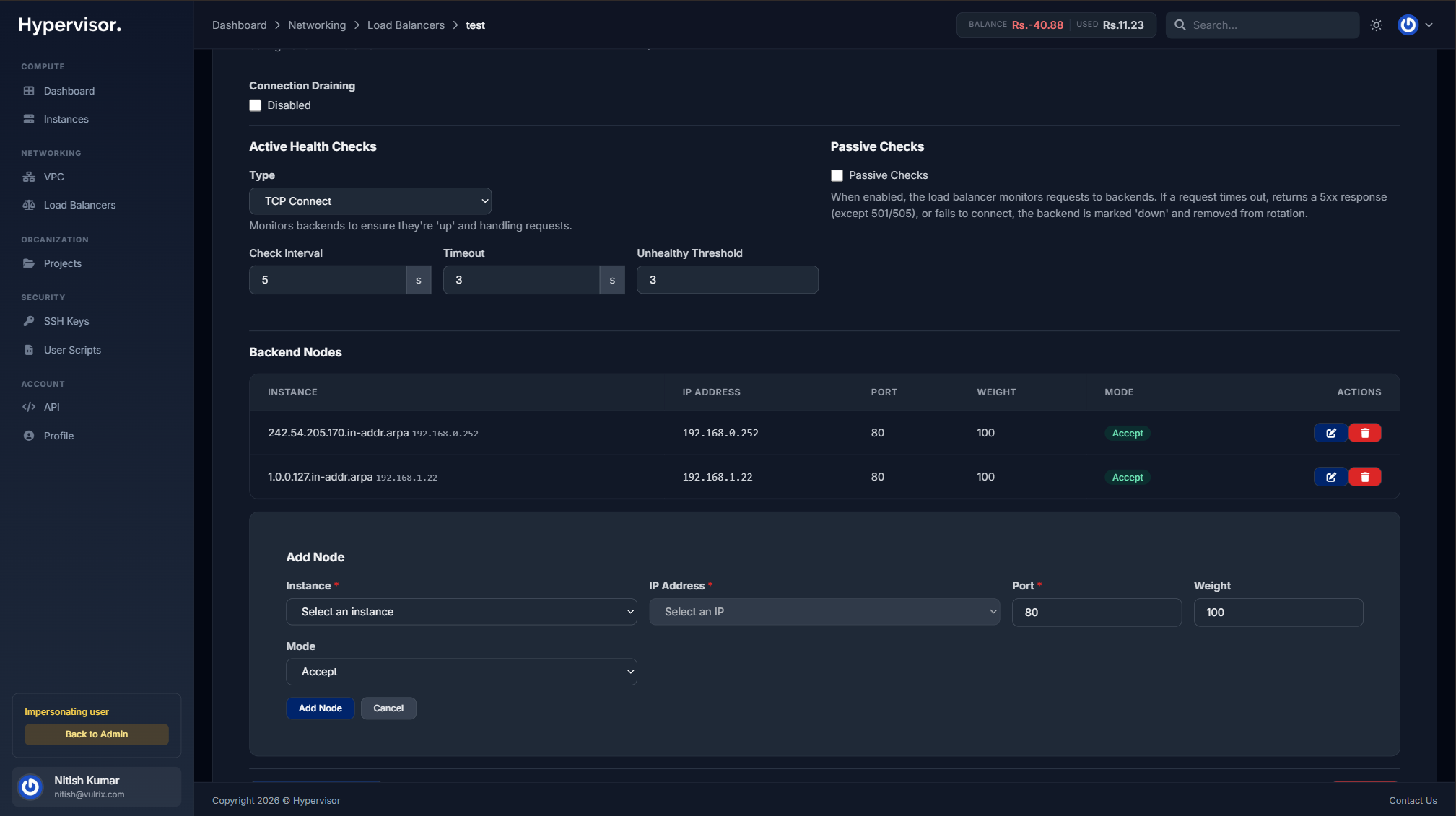

Backend Nodes

Backend nodes are the target instances that receive traffic within a configuration block.

- Click Add Node within the configuration block

- Configure:

- Instance - Select from your instances (VPC instances in VPC mode, or public instances in Public mode)

- IP Address - Auto-populated from the selected instance, or enter a custom IP

- Port - The port on the target instance (e.g., 8080)

- Weight - Relative weight for traffic distribution (higher = more traffic)

- Mode - Node availability: Active, Backup, or Drain

Screenshot: Load Balancer show page > Configuration block > Add Node form showing instance dropdown, IP address, port, weight, and mode fields

In VPC mode, instance targets resolve to their primary VPC IP. In Public mode, targets resolve to their primary public IP. You can also enter a custom IP address directly if the target is not managed by the panel.

Saving Configuration

After making changes to a configuration block, click Save to apply. The load balancer will validate and reload the HAProxy configuration automatically.
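
The validation rules applied on save are not listed in this guide. One rule that is stated is that each port can belong to only one configuration block. A minimal sketch of that single check, using a hypothetical list-of-dicts representation of the blocks:

```python
from collections import Counter

def duplicate_ports(blocks):
    """Return the sorted list of ports claimed by more than one configuration block."""
    counts = Counter(block["port"] for block in blocks)
    return sorted(port for port, n in counts.items() if n > 1)

# Hypothetical example data: two blocks both claim port 80.
blocks = [
    {"port": 80, "protocol": "HTTP"},
    {"port": 443, "protocol": "HTTPS"},
    {"port": 80, "protocol": "TCP"},
]
conflicts = duplicate_ports(blocks)
```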

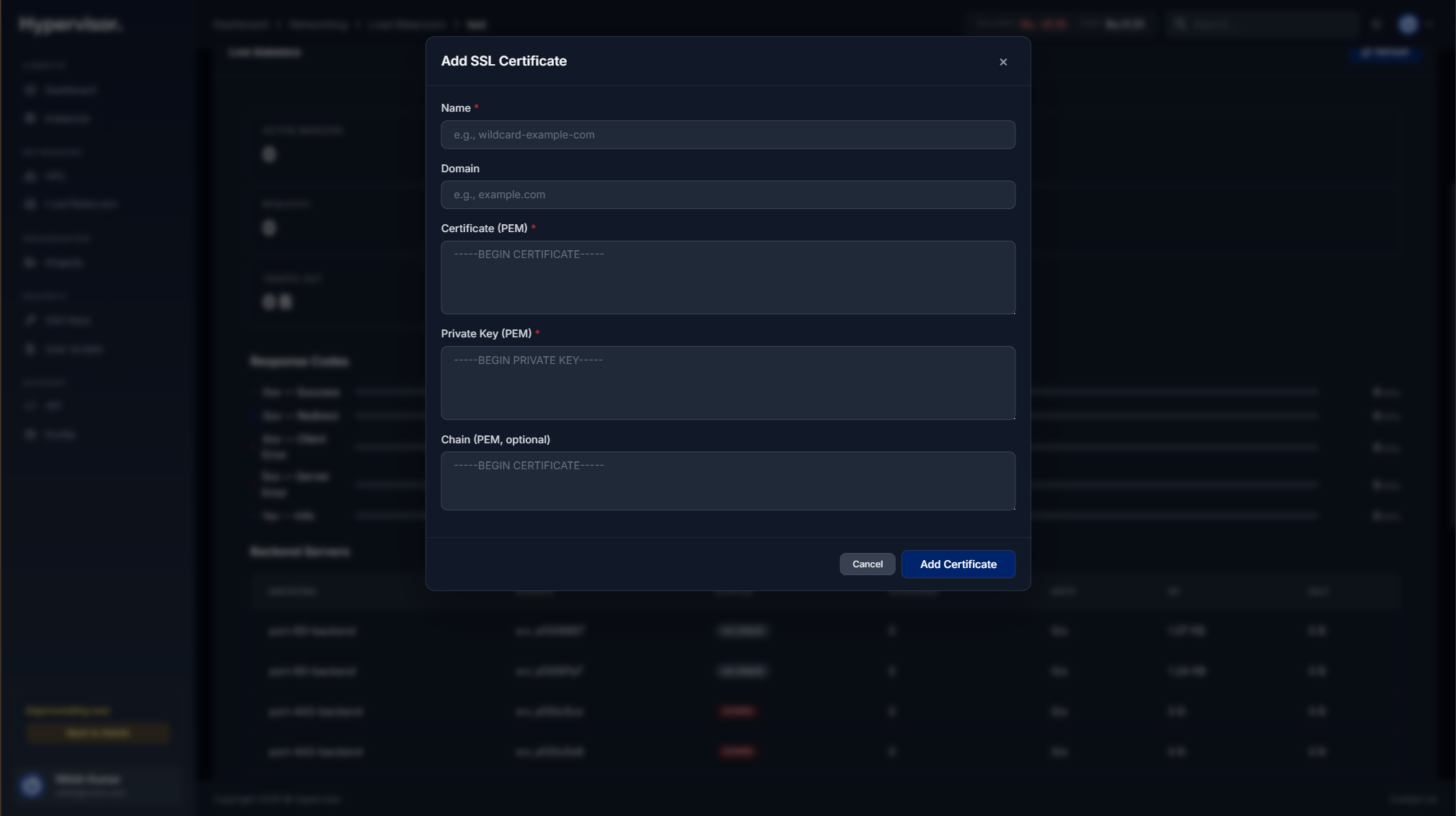

SSL Certificates

There are two ways to add SSL certificates to your load balancer: uploading your own certificate or using Let's Encrypt for automatic provisioning.

Uploading a Manual Certificate

- Scroll to the Certificates section

- Click Add Certificate

- Select Upload Certificate as the type

- Provide:

- Name - A label for this certificate (e.g., "example.com")

- Certificate - The full PEM certificate chain (including intermediates)

- Private Key - The private key for the certificate

Screenshot: Load Balancer show page > Certificates section > Add Certificate form with name, certificate textarea, and private key textarea

Let's Encrypt Auto-SSL

Let's Encrypt provides free, automatically provisioned and renewed SSL certificates. Instead of uploading your own certificate, the load balancer can obtain one for you.

Prerequisites:

- Your load balancer must have a public IP address

- Your domain(s) must point to the load balancer's public IP via an A record or CNAME

Setting up Let's Encrypt:

- Scroll to the Certificates section

- Click Add Certificate

- Select Let's Encrypt as the type

- Enter your domain name(s) -- use commas to separate multiple domains (e.g., example.com, www.example.com)

- Click Create

The system will immediately check whether your domain's DNS resolves to the load balancer's public IP:

- If DNS is correct: Certificate issuance begins automatically

- If DNS is not yet pointing: The certificate enters a Pending DNS state and the system retries every 5 minutes for up to 24 hours

Before requesting a Let's Encrypt certificate, create an A record pointing your domain to the load balancer's public IP address. You can also use a CNAME that resolves to the load balancer's IP. DNS propagation can take a few minutes, so the periodic check ensures your certificate is issued as soon as DNS resolves.
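
You can run a similar check yourself before requesting a certificate. The sketch below is an assumption about what "DNS resolves to the load balancer's public IP" means in practice (an IPv4 lookup that returns the load balancer's address); the function name `resolves_to` and the example addresses are hypothetical. The standard resolver follows CNAME records on its own, so both record types are covered.

```python
import socket

def resolves_to(domain, lb_ip, resolver=socket.getaddrinfo):
    """Return True if any IPv4 address for `domain` equals `lb_ip`.

    `resolver` is injectable so the function can be exercised without network access.
    """
    try:
        infos = resolver(domain, None, family=socket.AF_INET)
    except socket.gaierror:
        # The name does not resolve at all yet.
        return False
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return any(info[4][0] == lb_ip for info in infos)

# Retry schedule stated above: every 5 minutes for up to 24 hours.
RETRY_INTERVAL_MINUTES = 5
RETRY_WINDOW_HOURS = 24
MAX_CHECKS = RETRY_WINDOW_HOURS * 60 // RETRY_INTERVAL_MINUTES
```

For example, `resolves_to("example.com", "203.0.113.10")` returns True only once the record points at that address. At one check every 5 minutes, the 24-hour window allows about 288 attempts.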

Certificate Status Badges:

| Status | Meaning |
|---|---|
| Pending DNS (yellow) | Waiting for domain DNS to point to load balancer IP. Checked every 5 minutes. |
| Issuing (blue) | DNS verified, certificate is being provisioned. |
| Active (green) | Certificate is live and serving traffic. |
| Failed (red) | Issuance failed -- check that DNS is correct and try again. |
| Renewal Pending (orange) | Automatic renewal is in progress. |

Automatic Renewal:

Let's Encrypt certificates are valid for 90 days. The system automatically renews certificates that are within 30 days of expiration. You will receive an email notification when a certificate is renewed, or if renewal fails.
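
The renewal window is simple date arithmetic: with a 90-day lifetime and a 30-day window, renewal first becomes due around day 60. A minimal sketch follows; the function name `renewal_due` and the example dates are hypothetical.

```python
from datetime import datetime, timedelta, timezone

RENEWAL_WINDOW = timedelta(days=30)

def renewal_due(expires_at, now):
    """Return True once the certificate is within 30 days of expiring."""
    return expires_at - now <= RENEWAL_WINDOW

# Example: a certificate issued on 1 January with a 90-day lifetime.
issued = datetime(2025, 1, 1, tzinfo=timezone.utc)
expires = issued + timedelta(days=90)
due_on_day_60 = renewal_due(expires, issued + timedelta(days=60))
```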

System-Managed Port 80 Frontend:

When you create your first Let's Encrypt certificate, the system automatically creates a dedicated port 80 configuration block. This frontend handles two things:

- ACME challenge verification -- Required for Let's Encrypt to validate domain ownership

- HTTP to HTTPS redirect -- All non-ACME traffic on port 80 is automatically redirected to HTTPS

This system-managed frontend is visible in your configuration list with a System Managed badge. It cannot be edited or deleted while Let's Encrypt certificates are active.

Do not create your own port 80 configuration block if you are using Let's Encrypt. The system-managed frontend handles this automatically. If you need custom port 80 behavior, use a manual certificate instead.

Path-Based Routing

Path-based routing allows you to direct traffic to different backends based on the request's hostname, URL path, or both. This enables advanced deployment patterns like microservice routing, canary deployments, and blue-green releases -- similar to AWS Application Load Balancer (ALB).

Creating Routing Rules

- Navigate to a configuration block on your load balancer

- In the Routing Rules section, click Add Routing Rule

- Configure the matching conditions and target backends

Matching Conditions

Each routing rule can match on host, path, or both:

- Host only -- Match requests by hostname (e.g., api.example.com)

- Path only -- Match requests by URL path using a match type:

- Path Begins With -- Matches URLs starting with a prefix (e.g., /api/v2)

- Path Equals -- Matches an exact path (e.g., /health)

- Path Regex -- Matches a regular expression pattern (e.g., /api/v[0-9]+)

- Host + Path -- Match requests that satisfy both conditions (e.g., api.example.com AND path begins with /v2)

Routing rules are evaluated in order. The first rule that matches handles the request. If no rules match, traffic goes to the default backend configured on the configuration block.
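
First-match evaluation can be sketched in a few lines of Python. The `Rule` class, the match-type strings, and the backend labels are hypothetical, and two details are assumptions not confirmed by this guide: hostnames are compared exactly, and a regex may match anywhere in the path. The rule set mirrors the microservice routing example later in this section.

```python
import re
from dataclasses import dataclass
from typing import Optional

@dataclass
class Rule:
    backend: str
    host: Optional[str] = None        # None means "any host"
    match_type: Optional[str] = None  # "begins_with", "equals", "regex", or None
    path: Optional[str] = None

def path_matches(rule, path):
    """Apply the rule's path condition; a rule without one matches every path."""
    if rule.match_type is None:
        return True
    if rule.match_type == "begins_with":
        return path.startswith(rule.path)
    if rule.match_type == "equals":
        return path == rule.path
    if rule.match_type == "regex":
        # Assumption: unanchored search; the real matcher may anchor the pattern.
        return re.search(rule.path, path) is not None
    raise ValueError(f"unknown match type: {rule.match_type}")

def route(rules, host, path, default_backend):
    """Return the backend of the first matching rule, else the default backend."""
    for rule in rules:
        if rule.host is not None and rule.host != host:
            continue
        if path_matches(rule, path):
            return rule.backend
    return default_backend

rules = [
    Rule("api-servers", host="app.example.com", match_type="begins_with", path="/api"),
    Rule("static-servers", host="app.example.com", match_type="begins_with", path="/static"),
    Rule("web-app-servers", host="app.example.com"),
]
```

Because evaluation stops at the first match, the catch-all rule for `app.example.com` has to come last; placed first, it would capture `/api` and `/static` requests too.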

Weighted Backends

Each routing rule can distribute traffic across multiple backends with configurable weights, enabling canary and blue-green deployment patterns.

- In the routing rule form, select a Backend and assign a Weight (0--100; a weight of 0 sends no traffic to that backend)

- Click Add Backend to add additional backends to the same rule

- Adjust weights to control traffic distribution

Example -- Canary deployment:

| Backend | Weight | Traffic Share |
|---|---|---|
| Production (v1.0) | 90 | ~90% of requests |
| Canary (v1.1) | 10 | ~10% of requests |

Example -- Blue-green cutover:

| Backend | Weight | Traffic Share |
|---|---|---|
| Blue (current) | 0 | No traffic |
| Green (new) | 100 | All traffic |

When a rule has only one backend, weight defaults to 100 and all matched traffic goes to that single backend.

Start with a low weight (e.g., 5) for the new version and gradually increase it while monitoring error rates and latency. If something goes wrong, set the new version's weight to 0 to instantly route all traffic back to the stable version.
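
The relationship between weights and traffic share can be checked with a small Python sketch. It assumes each request is assigned independently with probability proportional to weight; the load balancer may instead use a deterministic weighted rotation, which gives the same long-run shares. The function names and backend labels are hypothetical.

```python
import random

def traffic_share(weights):
    """Return each backend's expected fraction of requests."""
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}

def pick_backend(weights, rng=random):
    """Pick one backend with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]

canary = {"production-v1.0": 90, "canary-v1.1": 10}
shares = traffic_share(canary)

# Simulate 1000 requests with a fixed seed so the run is repeatable.
rng = random.Random(1)
picks = [pick_backend(canary, rng) for _ in range(1000)]
canary_hits = picks.count("canary-v1.1")
```

Shares depend on the ratio of weights rather than their absolute values, so 9 and 1 behave the same as 90 and 10.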

On a Kubernetes cluster, you don't configure routing rules in the panel. Instead, you declare them in your Service manifest with the traffic-split or routing-rules annotation, and the in-cluster controller provisions the load balancer with weighted backends. See the Service LoadBalancer annotation reference for the full annotation surface.

Example: Microservice Routing

A common pattern is routing different API paths to different backend services:

| Rule | Host | Path Match | Backend |
|---|---|---|---|
| 1 | app.example.com | Begins with /api | API Servers |
| 2 | app.example.com | Begins with /static | CDN / Static Servers |
| 3 | app.example.com | (none) | Web App Servers |

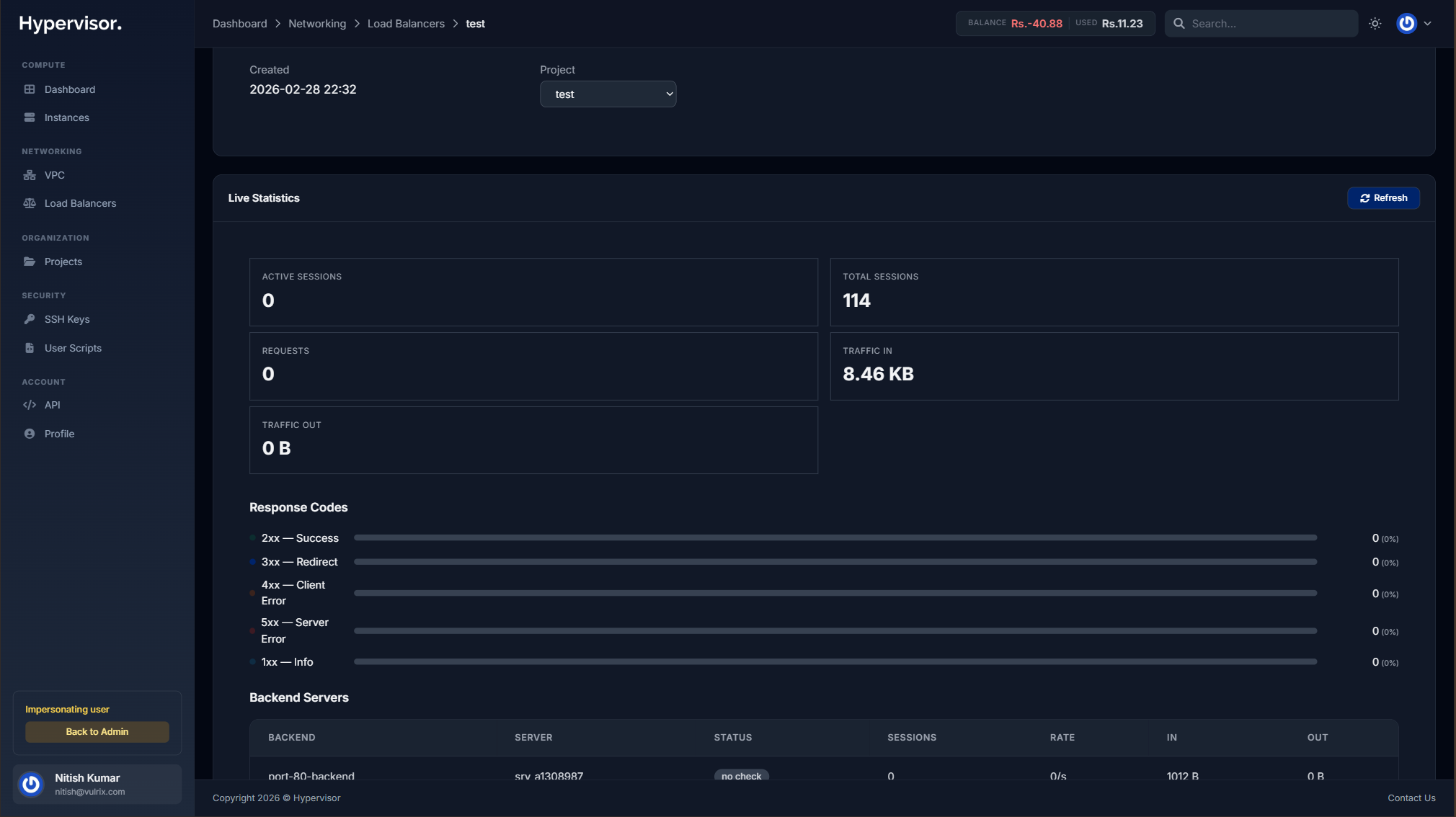

Monitoring

Statistics

The load balancer management page displays real-time statistics collected every 30 seconds:

- Session counts per frontend and backend

- Bytes in/out for traffic monitoring

- Backend health status for each target

- Connection rates and error counts

Screenshot: Load Balancer show page > Statistics section showing frontend/backend tables with session counts, bytes, status, and health

HA Status

If the hypervisor group has High Availability enabled, the load balancer's HA status is displayed:

- HA Heartbeat - Last heartbeat timestamp in the admin load balancers list

- HA Badge - Visual indicator on the admin load balancer detail page

Performance Metrics

When VictoriaMetrics is configured for the location, the load balancer collects detailed performance metrics and displays them as interactive time-series charts.

Available chart sections:

- Request Traffic -- Frontend request rate and active session count

- HTTP Response Codes -- 2xx, 4xx, and 5xx response rates

- Throughput -- Inbound and outbound data rates (KB/s)

- Backend Performance -- Response time, queue depth, and backend active sessions

- Backend Health -- Number of healthy (UP) backend servers over time

- System -- CPU and memory utilization

- Disk -- Disk usage percentage, read/write IOPS

- Network -- Interface-level RX/TX rates

Use the time range buttons (1m to 30d) to adjust the displayed window. Metrics refresh automatically every 30 seconds.

New load balancers automatically collect metrics when VictoriaMetrics is configured for the location. For existing load balancers deployed before metrics were enabled, an administrator can click Setup Metrics Agent on the load balancer detail page to install the metrics collector.

Troubleshooting

Let's Encrypt Certificate Stuck in "Pending DNS"

The system checks DNS every 5 minutes. If your certificate remains in Pending DNS:

- Verify your domain has an A record pointing to the load balancer's public IP

- Check DNS propagation using a tool like nslookup or an online DNS checker

- Ensure there are no conflicting CNAME records

- Wait for DNS propagation (can take up to 48 hours with some registrars)

If DNS is not resolved within 24 hours, the certificate status changes to Failed. You can delete the failed certificate and create a new one after fixing DNS.

Let's Encrypt Certificate Issuance Failed

Common causes:

- DNS not pointing to load balancer -- Verify your A record or CNAME

- Port 80 blocked -- The load balancer must be reachable on port 80 for domain verification

- Rate limits -- Let's Encrypt enforces rate limits (for example, 50 certificates per registered domain per week). Wait and retry if you've hit the limit.

Let's Encrypt Renewal Failed

You will receive an email notification if automatic renewal fails. The system retries daily. If renewal continues to fail:

- Ensure the domain still points to the load balancer's public IP

- Ensure port 80 is accessible

- Check that the load balancer is not suspended

Certificates are renewed 30 days before expiration, leaving ample time to resolve issues before the certificate expires.

Lifecycle Operations

From the load balancer management page, you can perform the following actions:

- Suspend -- Temporarily stops the load balancer (stops billing in hourly mode)

- Resume -- Restarts a suspended load balancer

- Destroy -- Permanently removes the load balancer and all its configuration

Destroying a load balancer is permanent. All configuration, certificates, and routing rules will be deleted. This action cannot be undone.